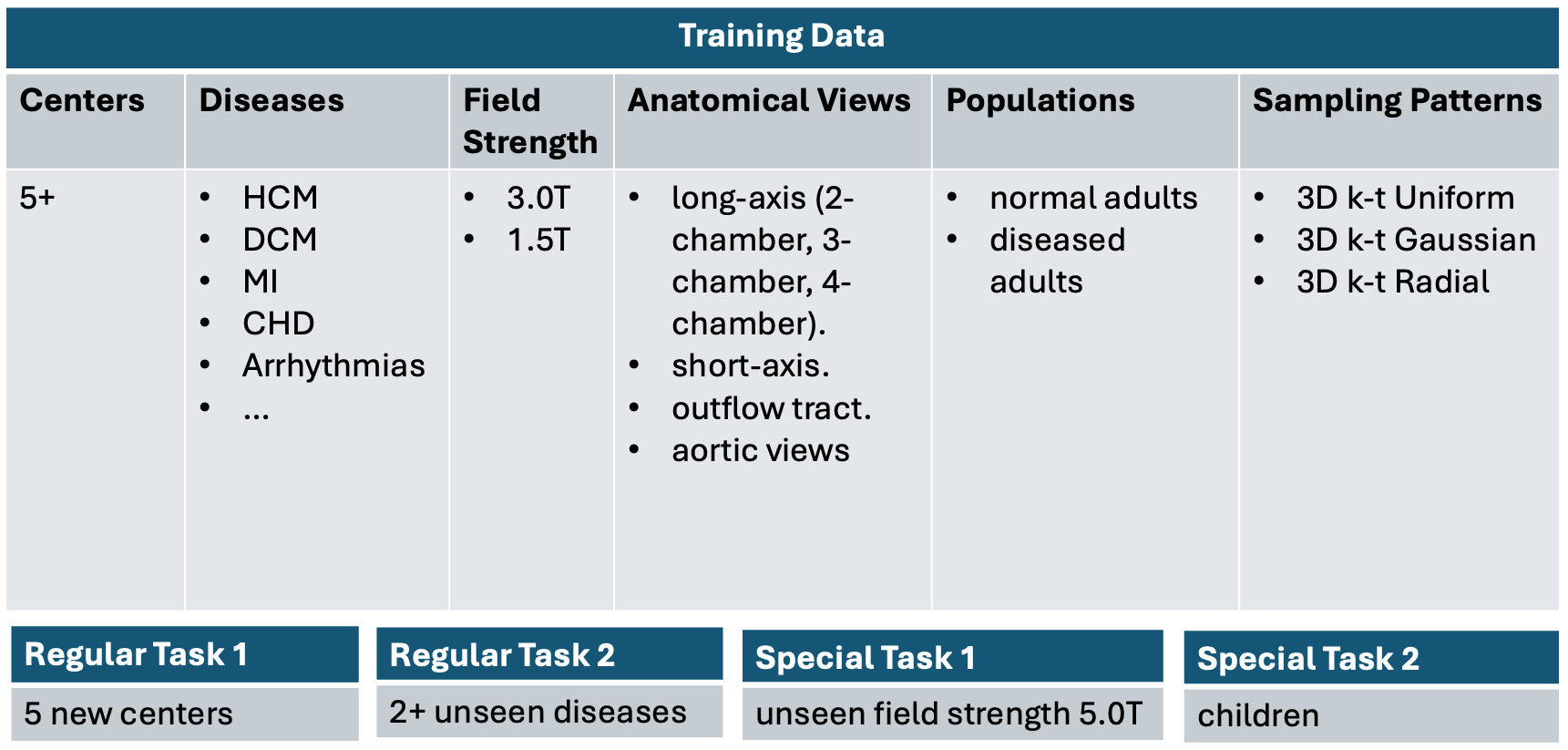

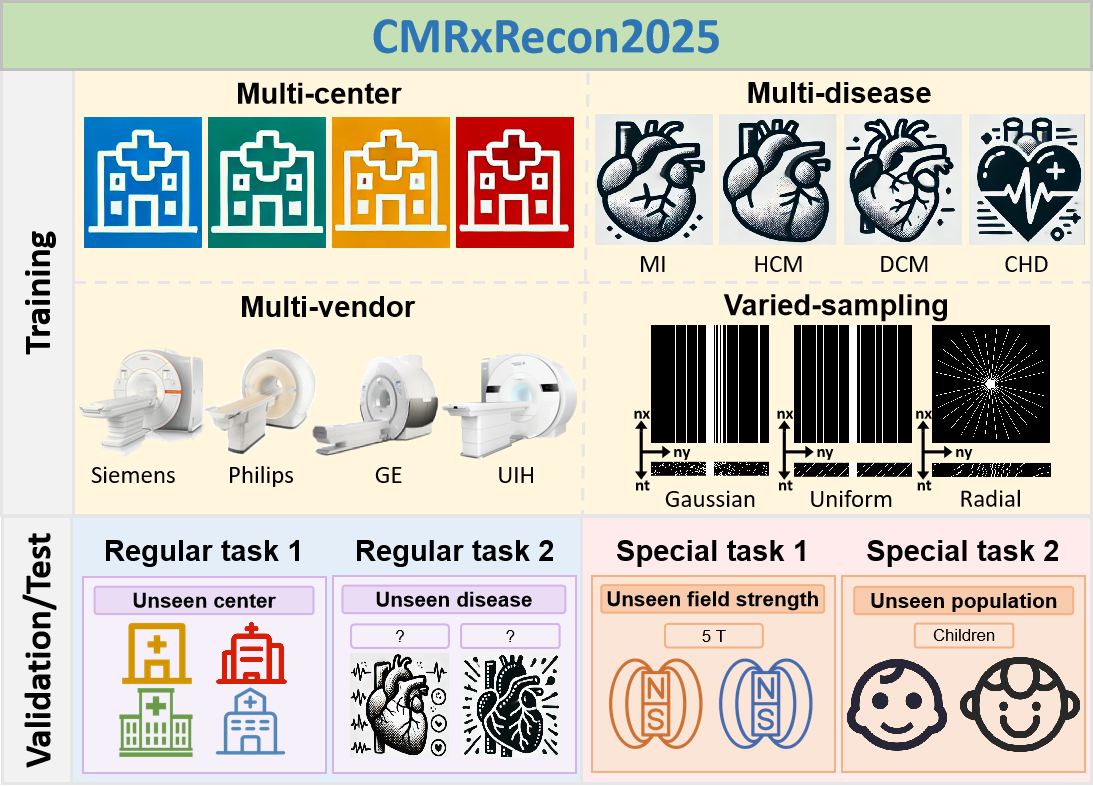

The CMRxRecon2025 challenge includes two regular tasks (announced during the MICCAI annual meeting) and two special tasks (announced during the SCMR annual meeting). The tasks are awarded independently, so each team can choose to participate in any of them. For each task, participants can submit one model (teams may use four different models or a single model, but the model must be submitted separately for each task). Please note that the training dataset we provide is the same for all four tasks; however, the validation and test datasets are different for each task.

The challenge aims to evaluate the model's generalization performance across diverse centers and scanners (regular task 1), diseases (regular task 2), magnetic fields (special task 1), and populations (special task 2).

Regular task 1 primarily focuses on addressing the decline in generalization performance across multiple centers. Participants are required to train the reconstruction model on the training dataset and achieve good multi-contrast cardiac image reconstruction results on the validation and test datasets. It is important to note that for this task, the validation set will include data from two entirely new centers (not present in the training set), and the test set will contain data from five entirely new centers (not present in the training set, including the two centers that appear in the validation set).

Regular task 2 primarily focuses on evaluating the reliability of the model in applications involving different cardiovascular diseases. Participants are required to train the reconstruction model on the training dataset and achieve good performance in disease applications on the validation and test datasets. It is important to note that for this task, the validation set will include data for two diseases that do not appear in the training set, and the test set will contain data for five diseases that do not appear in the training set (including the two diseases that appear in the validation set).

Please note that to ensure the model training process is not biased by the type of disease, we will not disclose the disease information for each data point in the training and validation datasets.

Special task 1 primarily focuses on addressing the decline in reconstruction generalization performance under different magnetic field strengths, especially those not included in the training data. Participants are required to train the reconstruction model on the training dataset (mainly consisting of 1.5T and 3.0T data) and achieve good multi-contrast cardiac image reconstruction results on the validation and test datasets (5.0T).

Special task 2 primarily focuses on addressing application issues in pediatric cardiac imaging. Participants are required to train the reconstruction model on the training dataset (mainly consisting of adults over 20 years old) and achieve good multi-contrast cardiac image reconstruction results on the validation and test datasets (minors under 18 years old). Please note that to ensure the model training process is not biased by age information, we will not disclose the age of each data point in the training dataset.

For the four tasks, we will use SSIM, PSNR, and NMSE as objective evaluation metrics, with SSIM being the primary metric for ranking. During the test phase, we will conduct an additional round of scoring (1 to 5 points) by experienced radiologists for the top 5 teams in each task, and subsequent evaluations will be based on these subjective scores. The scoring will cover three aspects: image quality, image artifacts, and clinical utility. The final ranking will be determined by the average of the objective and subjective score rankings, and this average ranking will serve as the final competition ranking.

Please note that during the validation phase, we will only conduct objective scoring and will not involve radiologist scoring.
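The rank averaging described above can be sketched as follows. This is only an illustration under stated assumptions, not the organizers' scoring code: the team names and scores are hypothetical, each team is assumed to have a single radiologist score already combined across the three aspects, and the tie-handling rules (tied scores share a position, ties in the average are broken by the objective rank) are assumptions, since the text does not specify them.

```python
from typing import Dict, List


def rank_positions(scores: Dict[str, float]) -> Dict[str, int]:
    """Return 1-based rank positions, higher score = better rank.

    Tied scores share the best position (an assumption).
    """
    ordered = sorted(scores.values(), reverse=True)
    return {team: ordered.index(score) + 1 for team, score in scores.items()}


def final_ranking(ssim: Dict[str, float], reader: Dict[str, float]) -> List[str]:
    """Order teams by the average of their objective and subjective ranks."""
    objective = rank_positions(ssim)
    subjective = rank_positions(reader)
    average = {t: (objective[t] + subjective[t]) / 2 for t in ssim}
    # Assumed tie-break: the better objective (SSIM) rank wins.
    return sorted(average, key=lambda t: (average[t], objective[t]))


# Hypothetical teams and scores, for illustration only.
ssim_scores = {"team_a": 0.91, "team_b": 0.93, "team_c": 0.89}
reader_scores = {"team_a": 4.5, "team_b": 4.0, "team_c": 3.0}
order = final_ranking(ssim_scores, reader_scores)
print(order)
```

Averaging rank positions rather than raw scores avoids having to put SSIM (0 to 1) and radiologist points (1 to 5) on a common scale.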

1. When evaluating SSIM, we will narrow down the assessment field-of-view to the region where the heart is located, to avoid interference from the background area.

2. Regarding the sampling patterns and accelerations, all paired data are assigned equal weight when calculating the final ranking metrics.

3. Participating teams are required to submit docker containers and process all the cases in the test set on our server. Cases without valid output will be assigned the lowest value of the metric.
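To make the notes above concrete, here is a minimal NumPy sketch of NMSE and PSNR on a cropped region, with equal-weight averaging over cases. It is written under several assumptions and is not the official evaluation code: `center_crop` is a hypothetical stand-in for the heart-region crop (the actual region is not specified here), PSNR uses the reference maximum as the peak value (conventions vary), and `penalty` is a caller-chosen placeholder for the value assigned to cases without valid output.

```python
import numpy as np


def center_crop(img: np.ndarray, frac: float = 0.5) -> np.ndarray:
    """Hypothetical stand-in for the heart-region crop: keep the central part."""
    h, w = img.shape[-2:]
    ch, cw = max(1, int(h * frac)), max(1, int(w * frac))
    top, left = (h - ch) // 2, (w - cw) // 2
    return img[..., top:top + ch, left:left + cw]


def nmse(ref: np.ndarray, rec: np.ndarray) -> float:
    """Normalized mean squared error: ||ref - rec||^2 / ||ref||^2."""
    return float(np.sum(np.abs(ref - rec) ** 2) / np.sum(np.abs(ref) ** 2))


def psnr(ref: np.ndarray, rec: np.ndarray) -> float:
    """Peak signal-to-noise ratio in dB, using max|ref| as the peak (assumption)."""
    mse = np.mean(np.abs(ref - rec) ** 2)
    peak = np.max(np.abs(ref))
    return float(10 * np.log10(peak ** 2 / mse))


def mean_score(per_case, penalty: float) -> float:
    """Equal-weight mean over cases; None marks a case without valid output."""
    values = [penalty if v is None else v for v in per_case]
    return float(np.mean(values))


# Toy data, for illustration only.
ref = np.ones((8, 8))
rec = 0.9 * ref
roi_ref, roi_rec = center_crop(ref), center_crop(rec)
print(nmse(roi_ref, roi_rec), psnr(roi_ref, roi_rec))
print(mean_score([0.9, None, 0.7], penalty=0.0))
```

Because every case enters the mean with the same weight, a single missing output pulls the average down by the full penalty, which is why processing all test cases matters.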

The top 5 winners in each task will receive monetary awards. The bonus distribution plan is shown in the table below.

| Winner | Monetary Awards | Certificate | Oral Presentation | Summary Paper Involved |
| --- | --- | --- | --- | --- |
| Top 1 | $600 | | | |
| Top 2 | $400 | | | |
| Top 3 | $300 | | | |
| Top 4 | $200 | | | |
| Top 5 | $100 | | | |

All submissions will be reported on the leaderboard. Each participating team can take part in any one or all four of the tasks. Prize-winning methods in Regular Task 1 and Task 2 will be announced publicly as part of a scientific session at the MICCAI annual meeting. Prize-winning methods in Special Task 1 and Task 2 will be announced publicly as part of a scientific session at the SCMR annual meeting.

Validation submission tutorial: https://www.synapse.org/#!Synapse:syn59814210/wiki/628454

Test submission tutorial: https://www.synapse.org/#!Synapse:syn59814210/wiki/628454

Hosted on the Synapse platform. Visit the Synapse Project Page.

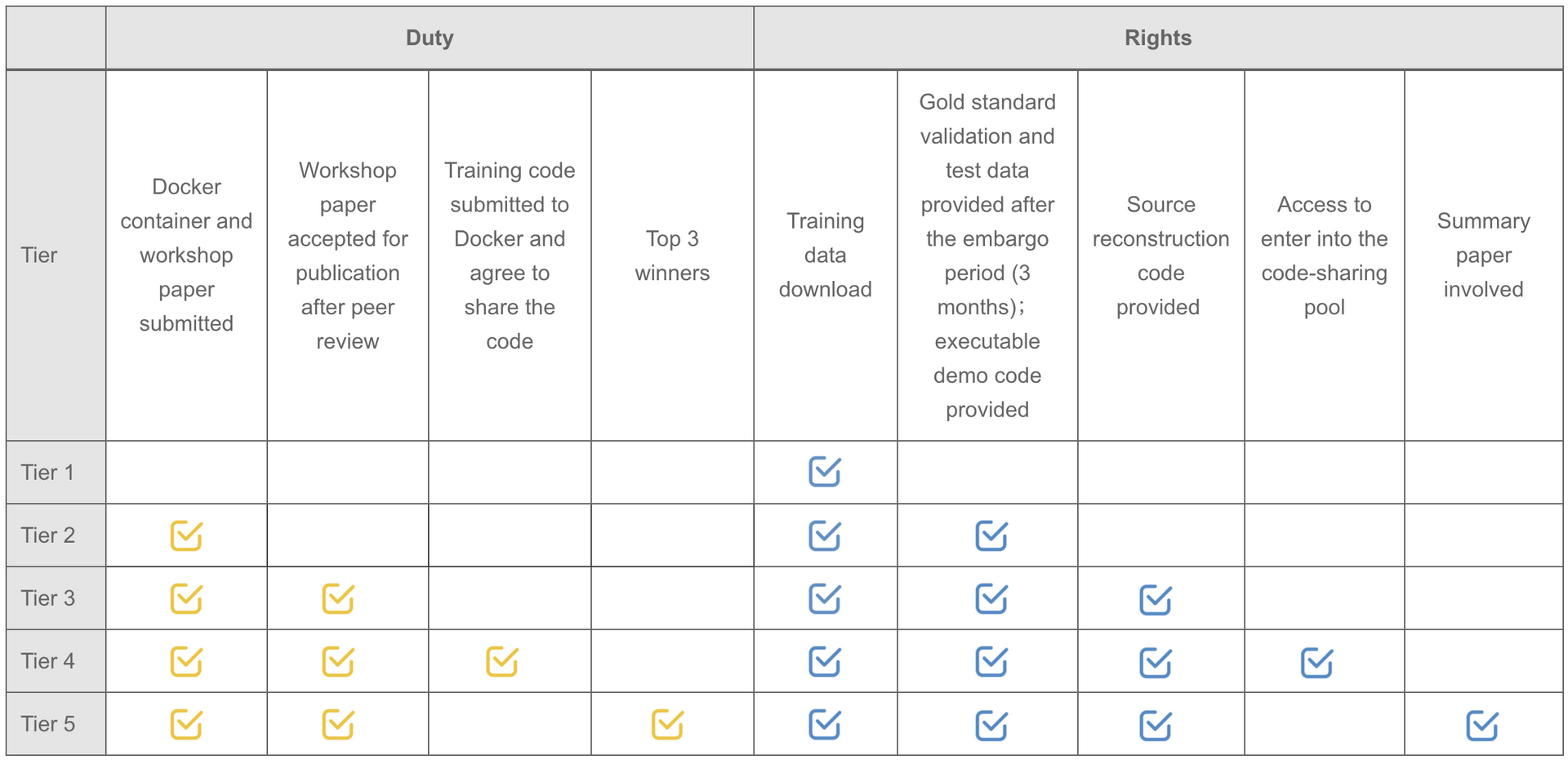

Note: Participants are not required to upload the complete training code. However, teams willing to upload the original training code will be automatically entered into the code-sharing pool.

The schedule of the challenge is as follows. All deadlines are Pacific Standard Time (PST +11:59).

| Date | Event |
| --- | --- |
| Mar. 1, 2025 | Website opens for registration |
| Mar. 10, 2025 | Release training data |
| Apr. 15, 2025 | Release validation data |
| May 15, 2025 | Submission system opens for validation for regular tasks |
| Jun. 10, 2025 | Submission system opens for validation for special tasks |
| Jun. 20, 2025 | Submission system opens for testing |
| Jul. 30, 2025 | STACOM paper submission deadline |
| Aug. 20, 2025 | Testing docker submission deadline for regular tasks |
| Sep. 27, 2025 | Release final results of regular tasks during the MICCAI annual meeting |
| Oct. 30, 2025 | Testing docker submission deadline for special tasks |
| Feb. 4-7, 2026 | Release final results of special tasks during the SCMR annual meeting |

1) It should be restricted to the data provided by the previous CMRxRecon challenge as well as data from the 'fastMRI' challenge (the most related public dataset), under the terms and conditions associated with the data usage.

2) For each task, participants are allowed to train only one model to reconstruct the various images under the aforementioned undersampling scenarios.

The submission instructions will be released on the Synapse platform.
